Perverse, puzzling, and unlikely, the “trolley problem” is perhaps the best known modern thought experiment — a fixture in many an introductory ethics or philosophy class. The question it poses is how to stop a runaway train car: Do nothing and kill five people on the track, or pull a lever to switch to a different track and kill just one person?

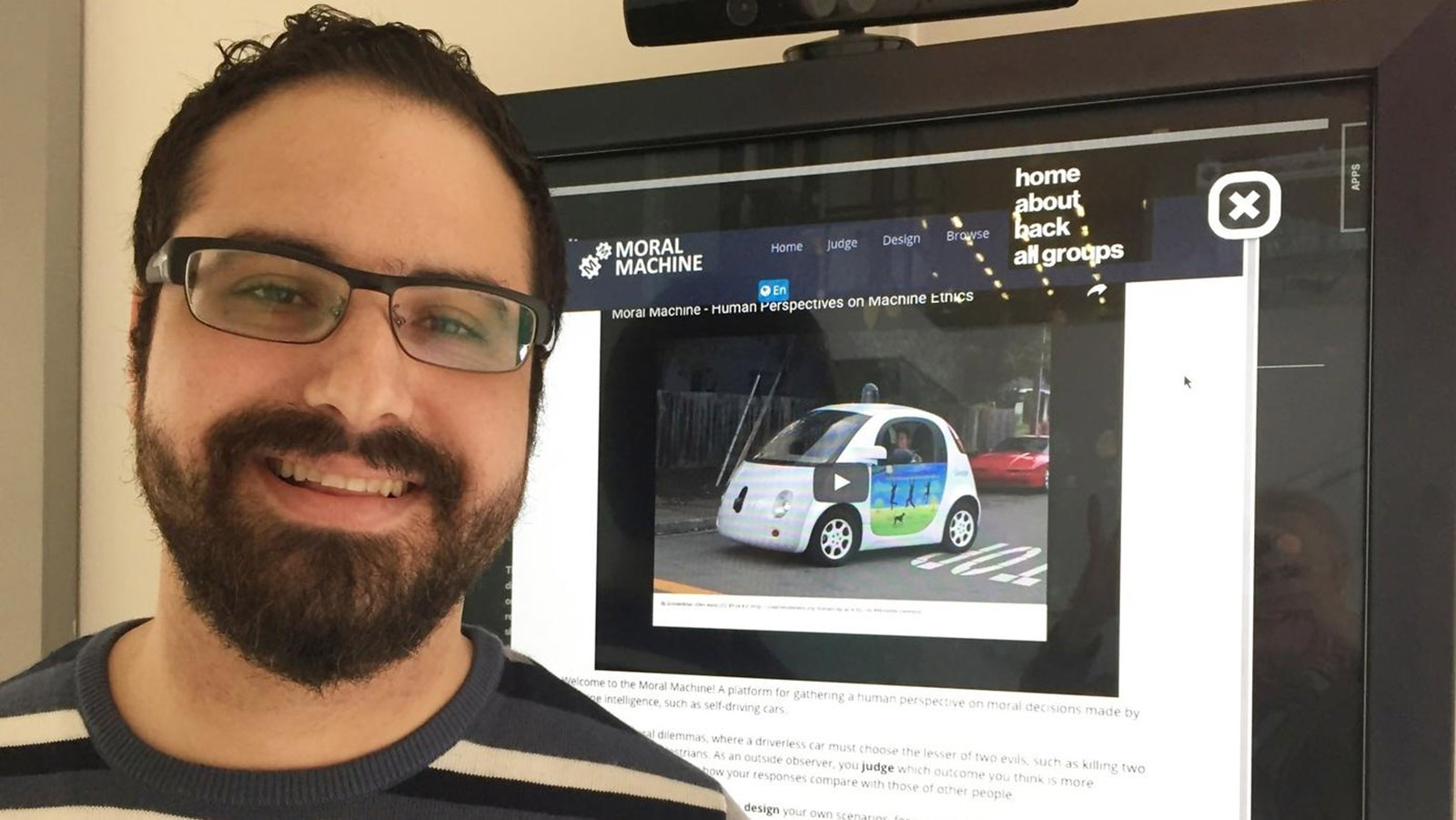

As a postdoctoral associate in the Scalable Cooperation group at MIT Media Lab, Edmond Awad helped to develop an updated trolley problem: the moral dilemmas facing the designers of driverless cars, which could well be a dominant mode of transportation in coming years. Awad’s research on a project called Moral Machine was the topic of the KSJ fellows’ November 14 seminar.

“Driverless cars, as you all know, are already on the roads,” said Awad. “Which is great, because experts say that these cars will be decreasing 90 percent of the accidents that we have today. For the other 10 percent, some of them might involve the car facing moral dilemmas and having to force fatalities.”

Studies have shown a discrepancy between what individuals say driverless cars should do and what kind of car they would actually purchase. Drivers tend to say that they want cars to protect pedestrians, but also that they would not buy such a vehicle themselves.

To collect vast amounts of data on human perspectives about such decisions, Awad and his team launched the Moral Machine website, where visitors play an interactive game that asks them to choose between two outcomes in a variety of randomly generated crash scenarios. As in the trolley problem, the visitor must choose to swerve or stay the course, sacrificing either the people in the car or one group of pedestrians to save other pedestrians.

Complicating the choices, the game presents players with different types of pedestrians and vehicle occupants. “We have the elderly,” Awad said. “We have executives. We have doctors, athletes, a pregnant woman, a homeless person, criminal, a baby, dog, and a cat.”

After visitors complete the game, they can submit their results as well as some details including their home country, age, and gender. There is also a section in which visitors can build their own scenarios, and a forum in which people can discuss their reasoning with other participants.

“We had four million users visit the website,” Awad said. “Three million of those actually completed the decision-making task, and they clicked on 37 million individual decisions. There’s also the survey that comes after, which is a little bit more work, and we still have over half a million survey responses.” The Scalable Cooperation group plans to publish the full results of the study in an upcoming paper.

The talk spurred a discussion that was lively even by KSJ standards. Fellow Teresa Carr asked Awad about the reasoning behind the character attributes included in the scenarios. “Where do you draw the line between which traits to include?” Carr said. “Because if you get into things like gender or social status, what’s to stop people from including more problematic things like race?”

Awad replied that the Moral Machine team included two psychologists and two anthropologists, who chose the attributes based on their use in previous psychological studies of human decision-making.

Another fellow, Mićo Tatalović, asked about the Moral Machine’s algorithms: “Are you talking about programming them to match who you are and what your values are, or what you would like your values to be?”

Tatalović added that “in the teaching of a deep learning machine, you are also imparting your own biases into it, whether or not you’re aware of that or not. And so if you’re a racist, for instance, you are imparting into the AI machine that that is a clean slate to begin with, but you are also imparting your racist bias into it as well.”

Awad replied that the project aims “to promote the discussion about the kind of moral principles that should be considered in driverless cars. One goal was to collect data. The other goal is to continue a discussion, and that’s why we had part of the website where people could design their own moral dilemmas, and then they could see what other people design, and they could discuss that.”

Jane Qiu asked Awad how the results of the study would be presented so that they would not be misinterpreted by legislators and used to directly inform driverless car policy.

Awad said the study was intended as the beginning of a discussion on decision-making in driverless cars, not the end of it. By gathering “evidence for human cognitive processes that are happening with respect to driverless cars,” he said, “then maybe policymakers could use it to determine what they should study more. This could be useful.”

On emerging technology like driverless cars, “there needs to be a common body of data,” said Fellow Joshua Hatch, “and a common way that everybody is looking at things. … What we really need to have is a collection of what are all the questions that we really need to ask.”

The discussion continued for half an hour beyond the scheduled seminar time. Afterward, Awad said: “It was the toughest audience I have ever presented to, but it was a great experience. I learned a lot from the comments and the suggestions, and I now better understand what concerns may arise, given the insightful questions.”
