The Knight Science Journalism Program at MIT took a close look at one of the profession’s most underappreciated practices and uncovered a few surprises.

At some science news publications, a fact-checker could get fired for reading a quote verbatim to a source. At others, it’s standard practice. Still other publications have no formal fact-checkers at all. So reports a new study coordinated by the Knight Science Journalism Program at MIT, “The State of Fact-Checking in Science Journalism,” one of the first industry-wide looks at how science news publications go about ensuring the trustworthiness of their reporting. A key takeaway: Different outlets approach the task in vastly different ways.

The study was funded by a grant from the Gordon and Betty Moore Foundation. The project was overseen by Knight Science Journalism Program director Deborah Blum, and spearheaded by Brooke Borel, a freelance journalist and editor. Borel notes that, although fact-checking is widely regarded as an essential part of science journalism, “we didn’t have hard data showing how it typically works or what sorts of resources journalists and outlets wish they had in order to improve it. The point of the report was to pull that data together through surveys and interviews.”

Borel and her team conducted more than 300 surveys and more than 90 interviews of editors, journalists, fact-checkers, and journalism-school faculty to gather various perspectives on fact-checking policies and procedures. The study focused not on the watchdog efforts of political fact-checkers like PolitiFact or FactCheck.org, but rather on the in-house quality control that publications do before a story goes to print, a practice known as editorial fact-checking. Survey questions ranged from who does the fact-checking to how much they are paid. The study encompassed nearly 80 English-language publications, including some outlets from African, Asian, and European countries.

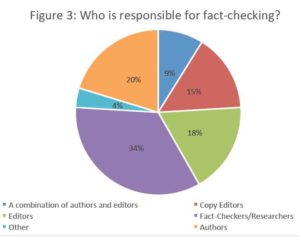

So, what is the state of fact-checking? The report seems to confirm at least one long-held suspicion: that support for fact-checking is waning. Only about a third of the publications in the study employ independent fact-checkers. About 15% said they rely on copy editors for fact-checking. Others place the onus on journalists and editors, and about a third have no formal fact-checking procedures in place at all.

But the study also produced some surprises. For instance, digital publications were just as likely as print outlets to use formal fact-checking, a finding that runs counter to the popular narrative that the decline of fact-checking is linked to the rise of digital publishing. The study suggests that the divide is not so much between print and digital as between news and longform: Publications seem more willing to invest in thorough fact-checks for long narrative features than they are for short, breaking-news dispatches.

Borel says she was also struck to learn that “the hourly rate for fact-checkers is all over the place.” Some fact-checkers reported earning $19 an hour; others four times that much. Some outlets paid as little as $15 an hour. On average, fact-checkers who participated in the study earned about $30 an hour.

The report lists several recommendations for improving and standardizing fact-checking across the profession, including:

- encouraging all outlets to put in place formal processes to catch errors before they print;

- encouraging publications to provide regular training and refresher courses for new and longtime staff;

- prioritizing fact-checking of longform journalism at outlets where it would be impractical to independently fact-check all stories; and

- creating a fact-checking cooperative, where outlets that can’t afford to pay full-time fact-checkers could hire checkers on a subscription or per-project basis.

The Gordon and Betty Moore Foundation sees the study as especially timely, given the current climate of heightened media scrutiny. “Science journalists are not only tasked with delivering relevant stories to readers, but they face the added challenge of decoding and translating dense, jargon-filled research,” reads a statement from the foundation. “Increased resources and processes to ensure accuracy of science stories would be immensely helpful to the field.”

Read the entire report here.

“The State of Fact-Checking in Science Journalism” was produced by Brooke Borel (author and project coordinator), Knvul Sheikh (researcher), Fatima Husain (researcher), Ashley Junger (researcher), Erin Biba (fact-checker), and Deborah Blum and Bettina Urcuioli of the Knight Science Journalism Program at MIT. The report was made possible by a generous grant from the Gordon and Betty Moore Foundation to the Knight Science Journalism Program at MIT. Particular thanks are due to Holly Potter, Chief Communications Officer at the Moore Foundation, both for her support of the project and for her commitment to accuracy in reporting on science.
